SAN JOSE, California — Nvidia officially released Dynamo 1.0 on Sunday, March 16, at its annual GTC conference, positioning the free, open-source software as the first distributed “operating system” for AI inference factories — a platform already running inside the infrastructure of AWS, Microsoft Azure, Google Cloud, and Oracle Cloud Infrastructure, according to the company’s official announcement.

Dynamo 1.0 Reshapes AI Inference Economics

The performance headline is hard to ignore. In independent benchmarks by SemiAnalysis InferenceX running DeepSeek R1-0528, Dynamo delivered up to a 7x increase in inference requests served on Nvidia Blackwell GPUs — with zero new hardware required. That math rewrites the return-on-investment for every data center operator that has already committed billions to Blackwell deployments.

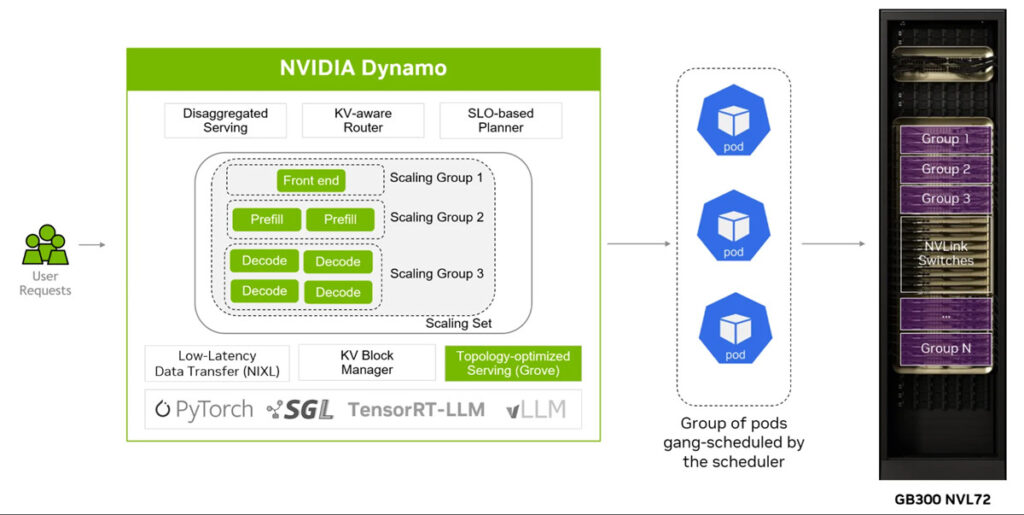

According to the Dynamo technical release, the gains stem from a disaggregated serving architecture that runs the prefill and decode phases on different GPUs, combined with KV cache routing that sends new requests to processors already holding relevant cached data, which eliminates redundant computation at scale.
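To make the routing idea concrete, here is a minimal Python sketch of cache-aware request routing. It is an illustration only, not Dynamo's implementation: the `Worker` class, the `route` function, and the `load_penalty` knob are all hypothetical names invented for this example, and a real router would track cache blocks rather than whole token sequences.

```python
from dataclasses import dataclass, field


@dataclass
class Worker:
    """Hypothetical GPU worker that remembers which token sequences it has cached."""
    name: str
    cached_prefixes: list[tuple[int, ...]] = field(default_factory=list)
    active_requests: int = 0


def shared_prefix_len(a, b):
    """Number of leading tokens the two sequences have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def best_overlap(worker, tokens):
    """Longest cached prefix on this worker that matches the start of the request."""
    return max((shared_prefix_len(p, tokens) for p in worker.cached_prefixes), default=0)


def route(workers, tokens, load_penalty=1.0):
    """Pick the worker with the highest (cache overlap - load) score.

    load_penalty is an illustrative knob, not a real Dynamo parameter.
    """
    def score(w):
        return best_overlap(w, tokens) - load_penalty * w.active_requests

    chosen = max(workers, key=score)
    chosen.active_requests += 1
    chosen.cached_prefixes.append(tuple(tokens))
    return chosen


# Two requests that share a system prompt should land on the same worker.
workers = [Worker("gpu-0"), Worker("gpu-1")]
system_prompt = [101, 7, 7, 42, 9]
first = route(workers, system_prompt + [1, 2])
first.active_requests -= 1  # pretend the first request has finished
second = route(workers, system_prompt + [3, 4])
print(first.name, second.name)
```

The second request goes to the worker that already holds the shared prompt, so the prefill work for those tokens would not need to be repeated. Subtracting a load term keeps one popular prefix from overloading a single worker.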

What Dynamo 1.0 Delivers on Blackwell Hardware

Three new capabilities define the 1.0 production release:

- ModelExpress cuts model replica startup time by up to 7x for large mixture-of-experts models like DeepSeek v3, streaming weights over NVLink instead of forcing each GPU node to download independently

- Native video-generation support with integrations for FastVideo, SGLang Diffusion, and vLLM-Omni, targeting compute-heavy video workloads at high resolution

- Multimodal optimization via a disaggregated encode/prefill/decode pipeline and an embedding cache that skips repeated GPU encoding — delivering 30% faster time-to-first-token on the Qwen3-VL-30B model
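The embedding cache in the last item can be pictured as a lookup keyed by the content of the image or video frame. The Python sketch below is an assumption-laden illustration, not Dynamo's code: `EmbeddingCache` and `fake_encoder` are hypothetical names, and the toy encoder stands in for an expensive GPU vision encoder.

```python
import hashlib


class EmbeddingCache:
    """Caches encoder outputs keyed by a hash of the raw media bytes."""

    def __init__(self, encode_fn):
        self._encode_fn = encode_fn  # stand-in for an expensive GPU encoder
        self._store = {}
        self.hits = 0
        self.misses = 0

    def get(self, media_bytes):
        key = hashlib.sha256(media_bytes).hexdigest()
        if key in self._store:
            self.hits += 1
            return self._store[key]
        # Cache miss: run the encoder once and remember the result.
        self.misses += 1
        embedding = self._encode_fn(media_bytes)
        self._store[key] = embedding
        return embedding


def fake_encoder(media_bytes):
    """Toy encoder: returns a short list of floats derived from the bytes."""
    return [b / 255 for b in media_bytes[:4]]


cache = EmbeddingCache(fake_encoder)
image = b"\x10\x20\x30\x40 same image sent twice"
first = cache.get(image)
second = cache.get(image)
print(cache.hits, cache.misses)
```

The second lookup returns the stored embedding without calling the encoder again, which is the kind of skipped work that would shorten time-to-first-token when the same media appears in many requests.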

The Fresh Angle No One Is Leading With

Here is what the wire coverage buries: the 7x Blackwell figure is benchmark-specific — measured on GB200 NVL72 systems running a single model architecture, DeepSeek R1-0528, under SemiAnalysis InferenceX conditions. Operators running older Hopper GPU infrastructure face a different reality. DigitalOcean, which adopted Dynamo for its Kubernetes-based GPU platform, confirmed up to 3x lower inference cost on Hopper GPUs — real, but materially different from the flagship claim. The distinction matters for the thousands of enterprises not yet on Blackwell who may read the headline and assume equal gains.
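A rough way to compare the two figures is to convert each multiplier into cost per request relative to a baseline. The Python sketch below assumes hardware spend stays fixed and that a throughput multiplier translates directly into cost per request. Those are simplifying assumptions for illustration, not a methodology taken from Nvidia, SemiAnalysis, or DigitalOcean.

```python
def relative_cost_per_request(multiplier):
    """Cost per request relative to baseline, assuming fixed hardware spend."""
    if multiplier <= 0:
        raise ValueError("multiplier must be positive")
    return 1.0 / multiplier


figures = [
    ("Blackwell, up to 7x requests served", 7.0),
    ("Hopper, up to 3x lower cost", 3.0),
]
for label, mult in figures:
    rel = relative_cost_per_request(mult)
    print(f"{label}: about {rel:.0%} of baseline cost per request")
```

Under these assumptions the best-case Blackwell result works out to roughly 14% of baseline cost per request, against roughly 33% for the Hopper figure. Both numbers are "up to" ceilings, so real deployments may land above them.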

Global Enterprise Footprint Already Established

The adoption list, documented in Nvidia’s official release and examined by this publication, extends well beyond hyperscalers:

| Sector | Adopters |
|---|---|
| Cloud Providers | AWS, Microsoft Azure, Google Cloud, Oracle Cloud, CoreWeave, DigitalOcean, Vultr |
| AI Platforms | Together AI, Perplexity, Cursor |
| Global Enterprises | PayPal, Pinterest, ByteDance, AstraZeneca, BlackRock, Instacart, Meituan, SoftBank |

Vipul Ved Prakash, CEO of Together AI, said Dynamo enables “accelerated, cost-effective inference for large-scale production workloads,” according to the official announcement. Pinterest CTO Matt Madrigal said the company is expanding AI experiences using the platform.

The Software Strategy Behind the Hardware Giant

Chirag Dekate, a Gartner analyst specializing in agentic AI infrastructure, offered the sharpest framing of what Nvidia is actually doing here. “Inference is becoming a software orchestration problem,” Dekate said. “By open-sourcing Dynamo, Nvidia is making a classic standards play: lower adoption friction, attract ecosystem partners and turn its preferred runtime model into the market’s default operating model.”

That strategy is deliberate. By releasing Dynamo as free, open-source software, Nvidia builds a dependency layer between its Blackwell hardware and every application running inference on top — making the GPUs harder to replace without also replacing the orchestration layer.

The company contributed TensorRT-LLM CUDA kernels to the FlashInfer project as part of the same release, embedding Nvidia-optimized code directly into community-maintained open-source frameworks including LangChain, vLLM, SGLang, and llm-d.

One Detail Still Unconfirmed

Nvidia has not published a granular breakdown of Dynamo’s performance benchmarks across its full GPU portfolio — including how H100 and H200 deployments fare compared to the flagship GB200 NVL72 systems that produced the 7x headline figure. A request for comment on that data had not received a response at the time of publication.

Dynamo 1.0 is available now on GitHub for developers worldwide. Nvidia’s roadmap next targets reinforcement learning workloads and expanded multimodal capabilities, though the company has announced no timeline for those additions.