CAMBRIDGE, Mass. — Engineers at the Massachusetts Institute of Technology have developed dust-sized porous silicon chips that perform mathematical calculations using waste heat instead of electricity, achieving more than 99 percent accuracy in matrix multiplication operations fundamental to machine learning, according to research published January 29 in Physical Review Applied.

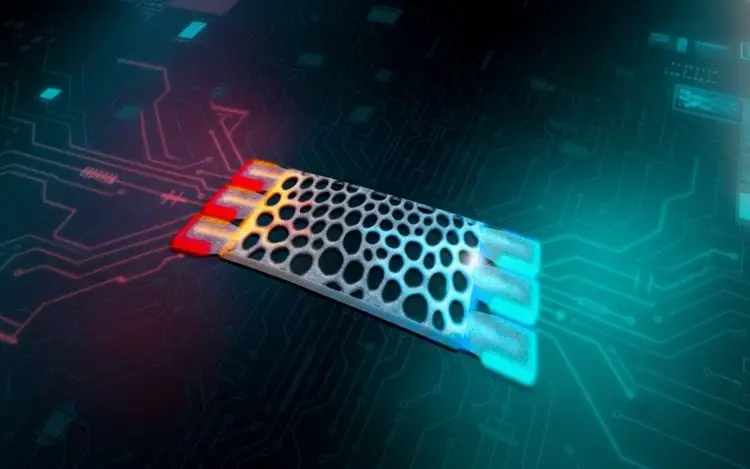

The microscopic structures encode input data as specific temperatures and channel heat flow through specially designed silicon frameworks to complete computations. Output is measured by thermal energy collected at a fixed-temperature endpoint, marking what researchers call a shift toward “thermal analog computing” that transforms unwanted heat into usable information.

From Liability to Asset

Most electronic devices generate significant heat during operation, requiring active cooling systems and consuming additional energy to manage thermal output. The MIT team reversed this paradigm by treating heat as the computational signal rather than a problem requiring mitigation.

“Most of the time, when you are performing computations in an electronic device, heat is the waste product. You often want to get rid of as much heat as you can,” said Caio Silva, an undergraduate student in MIT’s Department of Physics and lead author of the study. “But here, we’ve taken the opposite approach by using heat as a form of information itself and showing that computing with heat is possible.”

The researchers demonstrated matrix-vector multiplication, a core mathematical operation in large language models and other neural networks, with accuracy exceeding 99 percent on test matrices with two or three columns.
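For readers unfamiliar with the operation, the short Python sketch below shows what a matrix-vector multiplication computes: each output is a weighted sum of the inputs. The matrix entries and input temperatures are invented for illustration and are not taken from the study. The function name `matvec` is likewise hypothetical.

```python
def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector (a list of numbers)."""
    if any(len(row) != len(vector) for row in matrix):
        raise ValueError("each row needs one entry per input value")
    # Each output is the weighted sum of all inputs for that row.
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

# Hypothetical matrix with three columns, matching the small sizes tested.
weights = [[0.5, 0.25, 0.25],
           [0.0, 0.5, 0.5]]

# Hypothetical input temperatures, expressed relative to the
# fixed-temperature endpoint where the output is collected.
inputs = [4.0, 8.0, 12.0]

outputs = matvec(weights, inputs)
print(outputs)
```

In the thermal version described by the researchers, the weights would be set by the geometry of the silicon rather than stored as numbers, and the outputs would be read as collected heat.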

Inverse Design Breakthrough

The advance relies on software the team previously developed, which uses “inverse design” algorithms to automatically generate material structures with specific thermal properties. Rather than having engineers design geometries by hand, the system starts with the desired functionality and iteratively optimizes the structure until performance targets are met.

Giuseppe Romano, a research scientist at MIT’s Institute for Soldier Nanotechnologies and a member of the MIT-IBM Watson AI Lab, explained that the approach eliminates human design limitations. The computational method uses automatic differentiation in the JAX programming framework, combined with a custom differentiable thermal solver, to optimize porous silicon configurations whose geometry encodes the matrix coefficients.

The structures are designed as rectangular grids, with each pixel fine-tuned until heat flowing through the silicon performs the intended multiplication. The researchers also adjusted the thickness of the structures to expand the variety of matrices that could be represented, since thicker structures conduct heat differently than thin ones.
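The optimize-until-the-target-is-met loop can be sketched in a few lines of Python. This is a toy under stated assumptions, not the team’s software. The forward model `toy_solver` is a made-up stand-in for a thermal solver, the three “pixel” parameters and the target coefficients are hypothetical, and central finite differences are used in place of automatic differentiation so the example needs no external libraries.

```python
def toy_solver(p):
    """Hypothetical forward model: three pixel parameters -> two coefficients."""
    return [p[0] * p[2], p[1] * p[2]]

def loss(p, target):
    """Squared error between the achieved and the desired coefficients."""
    return sum((c - t) ** 2 for c, t in zip(toy_solver(p), target))

def gradient(p, target, h=1e-6):
    """Central finite differences, standing in for automatic differentiation."""
    grad = []
    for i in range(len(p)):
        up = p[:i] + [p[i] + h] + p[i + 1:]
        down = p[:i] + [p[i] - h] + p[i + 1:]
        grad.append((loss(up, target) - loss(down, target)) / (2 * h))
    return grad

def inverse_design(target, start, rate=0.2, tol=1e-12, max_steps=10000):
    """Adjust the parameters by gradient descent until the loss is below tol."""
    p = list(start)
    steps = 0
    while loss(p, target) >= tol and steps < max_steps:
        g = gradient(p, target)
        # Keep every parameter inside the allowed range [0, 1].
        p = [min(1.0, max(0.0, x - rate * gx)) for x, gx in zip(p, g)]
        steps += 1
    return p, steps

target = [0.3, 0.6]                      # desired coefficients (made up)
design, steps = inverse_design(target, [0.5, 0.5, 0.5])
achieved = toy_solver(design)
print(steps, achieved)
```

The point of the sketch is the direction of the workflow: the desired coefficients come first, and the design parameters are whatever the loop settles on.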

Handling Negative Numbers

The team encountered a fundamental challenge when representing matrices containing negative values, since temperature cannot be negative. They solved this by splitting each target matrix into separate positive and negative components, each containing only non-negative entries and each encoded in its own independently optimized silicon structure. Subtracting the two outputs at a later processing stage recovers the result for the full matrix, including its negative entries.
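The arithmetic behind this workaround can be checked with a short Python sketch. The matrix and input values are invented for illustration, and the helper names `split_signed` and `matvec` are hypothetical.

```python
def split_signed(matrix):
    """Split a matrix into two non-negative parts with matrix = pos - neg."""
    positive = [[max(v, 0.0) for v in row] for row in matrix]
    negative = [[max(-v, 0.0) for v in row] for row in matrix]
    return positive, negative

def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector (a list of numbers)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

# Hypothetical target matrix with negative entries.
target = [[1.0, -2.0],
          [-0.5, 3.0]]
inputs = [2.0, 1.0]

positive, negative = split_signed(target)

# Each part is multiplied separately, as two separate structures would do.
out_positive = matvec(positive, inputs)
out_negative = matvec(negative, inputs)

# The subtraction happens afterward, at a later processing stage.
result = [a - b for a, b in zip(out_positive, out_negative)]
print(result)
```

Because the multiplication is linear, the difference of the two partial outputs equals the product with the original signed matrix.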

Scalability Barriers Remain

While tests on small matrices demonstrated high accuracy, substantial obstacles block near-term application to deep learning systems that process massive datasets. Scaling the approach to handle computations required by modern AI models would demand tiling millions of structures together, creating bandwidth and integration challenges researchers have not yet solved.
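A back-of-the-envelope Python sketch suggests where a figure in the millions could come from. All sizes here are assumptions chosen for illustration: a hypothetical 4,096-by-4,096 weight matrix, tiles of three rows by three columns, and two structures per tile to cover positive and negative values. None of these numbers comes from the study.

```python
import math

def tiles_needed(rows, cols, tile_rows, tile_cols, signed=True):
    """Count the small structures needed to cover one large matrix."""
    count = math.ceil(rows / tile_rows) * math.ceil(cols / tile_cols)
    # A signed matrix needs one structure for each of its two components.
    return 2 * count if signed else count

# Hypothetical layer size and tile size.
unsigned_tiles = tiles_needed(4096, 4096, 3, 3, signed=False)
signed_tiles = tiles_needed(4096, 4096, 3, 3, signed=True)
print(unsigned_tiles, signed_tiles)
```

Under these assumptions a single matrix would already need about 3.7 million structures, before accounting for how inputs and outputs are routed between tiles.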

Accuracy degrades as matrix complexity increases, and current designs have limited information throughput compared to conventional digital processors. The thermal computing method cannot yet compete with GPUs and specialized tensor processing units that dominate AI hardware.

Immediate Applications in Thermal Management

Despite scalability limitations for machine learning, the structures show promise for tasks where electronics already contend with heat generation. Romano noted the devices could detect localized heat sources or temperature gradients in microelectronics without consuming additional power or requiring multiple sensor arrays that occupy chip space.

“Temperature gradients can cause thermal expansion and damage a circuit or even cause an entire device to fail,” Romano said. “If we have a localized heat source where we don’t want a heat source, it means we have a problem. We could directly detect such heat sources with these structures, and we can just plug them in without needing any digital components.”

Applications include identifying failing components through abnormal heat signatures, monitoring thermal distribution across processors, and implementing passive thermal management that requires no external energy input.

Next Steps Toward Sequential Operations

The research team plans to design structures capable of sequential operations where computation outputs feed directly into subsequent calculations, mirroring information flow in neural networks and other machine learning architectures. This would move beyond single matrix multiplications toward chained operations that form complete computational pipelines.

The work is an early-stage exploration of alternative computing paradigms that could supplement, rather than replace, conventional electronics in specialized applications where waste heat is abundant and energy efficiency is critical.